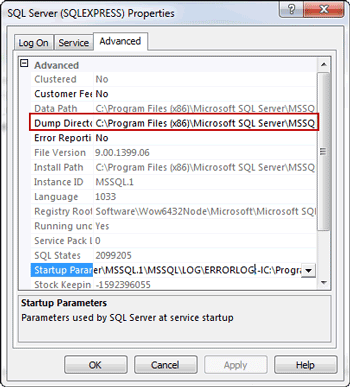

Mar 4, 2010 - If your LOG folder is located on a disk drive that doesn't have enough space left on it, you can change that value to a location on a drive that has enough space. So, since SQL 2005, you can change the default dump directory inside Configuration Manager.

Because Data Pump is server-based rather than client-based, dump files, log files, and SQL files are accessed relative to server-based directory paths. Data Pump requires that directory paths be specified as directory objects.

A directory object maps a name to a directory path on the file system. DBAs must ensure that only approved users are allowed access to the directory object associated with the directory path. The following example shows a SQL statement that creates a directory object named dpumpdir1 that is mapped to a directory located at /usr/apps/datafiles.

SQL> CREATE DIRECTORY dpumpdir1 AS '/usr/apps/datafiles';

The reason that a directory object is required is to ensure data security and integrity. For example:

- If you were allowed to specify a directory path location for an input file, then you might be able to read data that the server has access to, but to which you should not.
- If you were allowed to specify a directory path location for an output file, then the server might overwrite a file that you might not normally have privileges to delete.

On UNIX and Windows operating systems, a default directory object, DATA_PUMP_DIR, is created at database creation or whenever the database dictionary is upgraded. By default, it is available only to privileged users. (The user SYSTEM has read and write access to the DATA_PUMP_DIR directory, by default.) If you are not a privileged user, then before you can run Data Pump Export or Data Pump Import, a directory object must be created by a database administrator (DBA) or by any user with the CREATE ANY DIRECTORY privilege.

After a directory is created, the user creating the directory object must grant READ or WRITE permission on the directory to other users. For example, to allow the Oracle database to read and write files on behalf of user hr in the directory named by dpumpdir1, the DBA must execute the following command:

SQL> GRANT READ, WRITE ON DIRECTORY dpumpdir1 TO hr;

Note that READ or WRITE permission to a directory object only means that the Oracle database can read or write files in the corresponding directory on your behalf. You are not given direct access to those files outside of the Oracle database unless you have the appropriate operating system privileges. Similarly, the Oracle database requires permission from the operating system to read and write files in the directories.

Data Pump Export and Import use the following order of precedence to determine a file's location:

1. If a directory object is specified as part of the file specification, then the location specified by that directory object is used. (The directory object must be separated from the file name by a colon.)

2. If a directory object is not specified as part of the file specification, then the directory object named by the DIRECTORY parameter is used.

3. If a directory object is not specified as part of the file specification, and if no directory object is named by the DIRECTORY parameter, then the value of the environment variable, DATA_PUMP_DIR, is used. This environment variable is defined using operating system commands on the client system where the Data Pump Export and Import utilities are run. The value assigned to this client-based environment variable must be the name of a server-based directory object, which must first be created on the server system by a DBA. For example, the following SQL statement creates a directory object on the server system. The name of the directory object is DUMPFILES1, and it is located at '/usr/apps/dumpfiles1'.

   SQL> CREATE DIRECTORY DUMPFILES1 AS '/usr/apps/dumpfiles1';

   Then, a user on a UNIX-based client system using csh can assign the value DUMPFILES1 to the environment variable DATA_PUMP_DIR. The DIRECTORY parameter can then be omitted from the command line. The dump file employees.dmp, and the log file export.log, are written to '/usr/apps/dumpfiles1'.

   % setenv DATA_PUMP_DIR DUMPFILES1
   % expdp hr TABLES=employees DUMPFILE=employees.dmp

4. If none of the previous three conditions yields a directory object and you are a privileged user, then Data Pump attempts to use the value of the default server-based directory object, DATA_PUMP_DIR. This directory object is automatically created at database creation or when the database dictionary is upgraded. You can use the following SQL query to see the path definition for DATA_PUMP_DIR:

   SQL> SELECT directory_name, directory_path FROM dba_directories
     2  WHERE directory_name='DATA_PUMP_DIR';

   If you are not a privileged user, then access to the DATA_PUMP_DIR directory object must have previously been granted to you by a DBA.
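The order of precedence described above can be sketched as a small Python function. This is only an illustrative model, not Data Pump's actual implementation: the function name `resolve_directory_object` and its parameters are hypothetical, and the default directory object is written as `DATA_PUMP_DIR`, its spelling in Oracle.

```python
from typing import Optional, Tuple


def resolve_directory_object(
    file_spec: str,
    directory_param: Optional[str] = None,
    client_env_value: Optional[str] = None,
    can_use_default: bool = False,
) -> Tuple[str, str]:
    """Return (directory_object, file_name) for a Data Pump file specification.

    can_use_default models "privileged user, or a user who was granted
    access to the default directory object by a DBA".
    """
    # 1. A directory object in the file specification (before the colon) wins.
    if ":" in file_spec:
        directory, file_name = file_spec.split(":", 1)
        return directory, file_name
    # 2. Otherwise use the directory object named by the DIRECTORY parameter.
    if directory_param:
        return directory_param, file_spec
    # 3. Otherwise use the client-side environment variable, whose value
    #    must be the name of a server-side directory object.
    if client_env_value:
        return client_env_value, file_spec
    # 4. Finally fall back to the default server-side directory object.
    if can_use_default:
        return "DATA_PUMP_DIR", file_spec
    raise ValueError("no directory object could be determined")
```

For instance, `resolve_directory_object("employees.dmp", client_env_value="DUMPFILES1")` corresponds to the csh example, where the DIRECTORY parameter is omitted and the environment variable supplies the directory object.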

Do not confuse the default DATA_PUMP_DIR directory object with the client-based environment variable of the same name.

Keep the following considerations in mind when working in an Oracle RAC environment:

- To use Data Pump or external tables in an Oracle RAC configuration, you must ensure that the directory object path is on a cluster-wide file system. The directory object must point to shared physical storage that is visible to, and accessible from, all instances where Data Pump or external table processes may run.
- The default Data Pump behavior is that worker processes can run on any instance in an Oracle RAC configuration. Therefore, workers on those Oracle RAC instances must have physical access to the location defined by the directory object, such as shared storage media. If the configuration does not have shared storage for this purpose, but you still require parallelism, then you can use the CLUSTER=NO parameter to constrain all worker processes to the instance where the Data Pump job was started.
- Under certain circumstances, Data Pump uses parallel query slaves to load or unload data. In an Oracle RAC environment, Data Pump does not control where these slaves run, and they may run on other instances in the Oracle RAC, regardless of what is specified for CLUSTER and SERVICE_NAME for the Data Pump job. Controls for parallel query operations are independent of Data Pump. When parallel query slaves run on other instances as part of a Data Pump job, they also require access to the physical storage of the dump file set.

If you use Data Pump Export or Import with Oracle Automatic Storage Management (Oracle ASM) enabled, then you must define the directory object used for the dump file so that the Oracle ASM disk group name is used (instead of an operating system directory path). A separate directory object, which points to an operating system directory path, should be used for the log file.
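As a rough illustration of how these choices show up on the command line, the following Python sketch assembles an expdp argument list that names separate directory objects for the dump file and the log file and optionally adds CLUSTER=NO. The helper `build_expdp_args` and the directory object names `asm_dumps` and `os_logs` are hypothetical; the sketch assumes a DBA has already created one directory object on an Oracle ASM disk group and another on an operating system path.

```python
from typing import List, Sequence


def build_expdp_args(
    user: str,
    tables: Sequence[str],
    dump_dir: str,
    dump_file: str,
    log_dir: str,
    log_file: str,
    cluster: bool = True,
) -> List[str]:
    """Build an expdp command line as a list of arguments (not executed here)."""
    args = [
        "expdp",
        user,
        "TABLES=" + ",".join(tables),
        # The directory object is separated from the file name by a colon.
        f"DUMPFILE={dump_dir}:{dump_file}",
        # The log file goes to a separate directory object on an OS path.
        f"LOGFILE={log_dir}:{log_file}",
    ]
    if not cluster:
        # Constrain all worker processes to the instance that started the job.
        args.append("CLUSTER=NO")
    return args


# Hypothetical directory objects: asm_dumps (ASM disk group), os_logs (OS path).
command = build_expdp_args(
    "hr", ["employees"], "asm_dumps", "employees.dmp", "os_logs", "export.log",
    cluster=False,
)
print(" ".join(command))
```

Building the arguments as a list keeps each parameter separate, which makes it straightforward to pass them to a process launcher without shell quoting problems.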

Recently Microsoft released a preview version of the SQL Server Diagnostics extension to SSMS. I downloaded it, and you can see the results below. If you install the extension while SSMS is up and running, you’ll have to stop and restart SSMS in order to see the new menu option.

[Figure: SQL Server Diagnostics extension to SSMS]

If you want to see how the tool works, you’ll need to either wait for a dump or forcibly create one.

The instructions on creating a SQL Server dump from 2010 are still current and available. The easiest way to force the creation of a dump file is from within Task Manager, but I wanted to direct the output to a location of my choosing, so I used sqldumper.

[Figure: Creating a dump file from Task Manager]

You’ll need to use an Administrative Command Prompt to run sqldumper. The exact path depends on which version of SQL Server you are running.

I’m running SQL Server 2016, so the path is C:\Program Files\Microsoft SQL Server\130\Shared, where 130 corresponds to the compatibility level of SQL Server 2016. SQL Server 2014 is 120, and so on. Run sqldumper /? so you can see the command line options.

[Figure: Output from sqldumper /?]

You need the pid of sqlservr.exe to run sqldumper. Go to the Details tab in Task Manager.
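The version-to-folder mapping can be sketched in Python as follows. The helper name `sqldumper_path` is hypothetical, the 130 and 120 entries come from the text above, and the remaining entries extend the same pattern to other releases; the sketch also assumes a default installation under C:\Program Files.

```python
from pathlib import PureWindowsPath

# Folder name under "Microsoft SQL Server" for each release.
# 2016 -> 130 and 2014 -> 120 are from the text; the others follow the same pattern.
SHARED_FOLDER_BY_RELEASE = {
    "2012": "110",
    "2014": "120",
    "2016": "130",
    "2017": "140",
    "2019": "150",
}


def sqldumper_path(release: str, program_files: str = r"C:\Program Files") -> PureWindowsPath:
    """Return the expected location of sqldumper.exe for a SQL Server release."""
    folder = SHARED_FOLDER_BY_RELEASE[release]  # KeyError for unknown releases
    return PureWindowsPath(program_files) / "Microsoft SQL Server" / folder / "Shared" / "sqldumper.exe"


print(sqldumper_path("2016"))
```

PureWindowsPath is used so the path is built with Windows separators even when the script runs on another operating system.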

My pid was 3892. Yours will be something else. Running sqldumper.exe resulted in the following output:

[Figure 5: Output from creating a dump using sqldumper]

I selected the Analyze Dumps option. This is a cloud-based service, so be prepared to wait as your large dump file is sent to Microsoft.

Notice that you have to pick an Azure data center as your dump’s repository. Can you upload this outside the country where your server is? Do your corporate policies allow a dump file to be shared outside your company?

[Figure: Uploading dump file for analysis]

After the upload finished, the analysis took several minutes. You have to consider corporate policies and government laws on submitting corporate data to the cloud. If it is allowable to upload your dump to an external cloud server, you might find this new service useful.

About John Paul Cook

John Paul Cook is a database and Azure specialist in Houston. He previously worked as a Data Platform Solution Architect in Microsoft's Houston office. Prior to joining Microsoft, he was a SQL Server MVP. He is experienced in SQL Server and Oracle database application design, development, and implementation.

He has spoken at many conferences, including Microsoft TechEd and the SQL PASS Summit. He has worked in the oil and gas, financial, manufacturing, and healthcare industries. John is also a registered nurse who recently completed the education to become a psychiatric nurse practitioner.

Contributing author to SQL Server MVP Deep Dives and SQL Server MVP Deep Dives Volume 2.